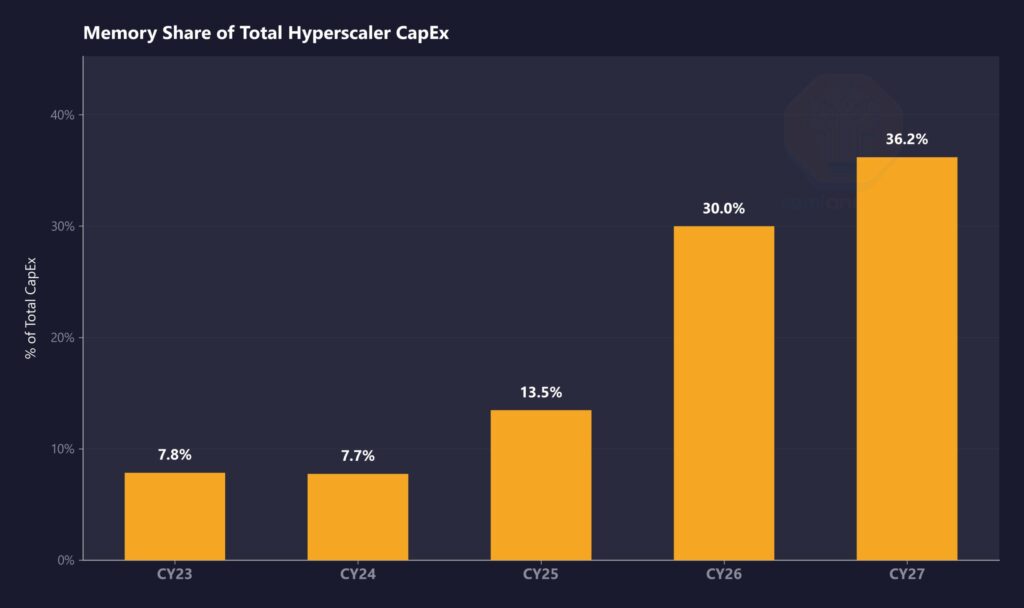

Memory costs now shape how hyperscalers plan and spend on infrastructure, and the shift has come faster than expected. DRAM prices continue to rise in both contract and spot markets, pushing memory toward a much larger share of total capital expenditure while demand for AI systems shows no sign of slowing.

Companies building large-scale AI infrastructure now face a situation where memory alone can account for a significant portion of total system cost, which changes how budgets get allocated across compute, storage, and networking.

SemiAnalysis highlights how quickly this change has taken place and how it continues to move upward as supply remains tight and demand keeps expanding across AI workloads.

“In CY23 and CY24, memory was ~8% of total Hyperscaler spend. We estimate it hits 30% in CY26 and moves higher in CY27. That’s a near-4x shift in just four years.” — SemiAnalysis

This rapid increase is tied directly to rising DRAM and LPDDR5 prices and to continued shortages of the high-bandwidth memory (HBM) used in AI servers. Hyperscalers have limited options and must absorb the higher costs to keep expanding their infrastructure.
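For a sense of scale, the short Python sketch below works through the arithmetic behind the quoted shift. It uses only the two shares cited by SemiAnalysis; the variable names are illustrative, and treating "~8%" as exactly 8 is an assumption made for the calculation.

```python
# Memory's share of total hyperscaler spend, in percent, as quoted by SemiAnalysis.
share_cy24_pct = 8    # "~8%" in CY23 and CY24 (treated as exactly 8 here, an assumption)
share_cy26_pct = 30   # SemiAnalysis estimate for CY26

# How many times larger the CY26 share is than the CY24 share.
multiple = share_cy26_pct / share_cy24_pct

# The same change expressed in percentage points.
point_change = share_cy26_pct - share_cy24_pct

print(f"multiple: {multiple:.2f}x")
print(f"change: {point_change} percentage points")
```

Under that assumption the ratio works out to 3.75, in line with the "near-4x" description, and corresponds to a gain of 22 percentage points in memory's share of spend.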

Rising prices push memory to the center of AI spending

Memory pricing trends now influence almost every layer of AI infrastructure, from rack-scale systems to custom silicon deployments, since technologies like DDR5 and LPDDR5 have become standard across hyperscaler environments and require a steady supply at scale.

At the same time, HBM shortages continue to tighten availability for advanced AI servers, which increases overall system costs even before further price increases take effect.

SemiAnalysis outlines the key drivers behind this pricing pressure and explains how multiple factors continue to push memory costs higher across the market.

“DRAM prices are expected to more than double in CY26… LPDDR5 contract pricing up over 3x since 1Q25… HBM remains structurally undersupplied through CY27.” — SemiAnalysis

These conditions make memory one of the most expensive components in AI infrastructure. With supply constrained and few alternatives available, hyperscalers continue spending despite the rising costs.

NVIDIA locks in supply advantage as competitors face pressure

NVIDIA sits in a stronger position within the supply chain, as it secured long-term agreements early and gained preferred access to DRAM supply, which allows the company to manage both pricing and availability more effectively than its competitors.

SemiAnalysis points to a specific supply chain dynamic that gives NVIDIA a clear edge in the current environment.

“NVDA receives VVP (Very Very Preferred) DRAM pricing, well below both hyperscalers and the broader market.” — SemiAnalysis

This preferred status reduces NVIDIA’s exposure to rising memory costs and helps the company maintain pricing flexibility across its AI hardware. Competitors that lack similar access face higher costs and tighter supply, which makes competing at scale more difficult as memory takes a growing share of infrastructure spending.